Papers

Clayton, M., Jakubowski, K., Eerola, T., Keller, P. E., Camurri, A., Volpe, G., & Alborno, P. (2020). Interpersonal Entrainment in Music Performance: Theory, Method, and Model. Music Perception: An Interdisciplinary Journal, 38(2), 136-194.

Summary: This article comprises an extensive theoretical review of literature and methods for measuring interpersonal synchronisation and coordination in music ensembles, the first cross-cultural comparisons between interpersonal entrainment in natural musical performances, and a conceptual model describing the physical, psychological, and social processes implicated in musical entrainment at different timescales.

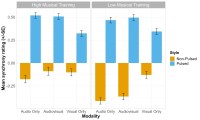

Jakubowski, K., Eerola, T., Blackwood Ximenes, A., Ma, W. K., Clayton, M., & Keller, P. E. (2020). Multimodal perception of interpersonal synchrony: Evidence from global and continuous ratings of improvised musical duo performances. Psychomusicology: Music, Mind, and Brain.

Summary: This paper examines audience judgements of interpersonal synchrony in musical duo performances via global (retrospective) ratings of 10-second excerpts and continuous (on-line) ratings of longer excerpts (approximately 30 seconds to 1 minute). Participants perceived standard jazz improvisations featuring a regular beat as significantly more synchronous than free improvisations that aimed to eschew the induction of such a beat. However, ratings of perceived synchrony were more similar across these two styles when only the visual information from the performance was available, suggesting that performers’ bodily cues functioned similarly to communicate and coordinate musical intentions. Computational analysis of the audio and visual aspects of the performances indicated that synchrony ratings increased with audio event density and when co-performers engaged in periodic movements at similar frequencies, and that the salience of visual information increased when synchrony ratings were made continuously over longer timescales. These studies offer new insights into the correspondence between objective and subjective measures of synchrony and contribute methodological advances, indicating both parallels and divergences between the results obtained in paradigms using global versus continuous ratings of musical synchrony.

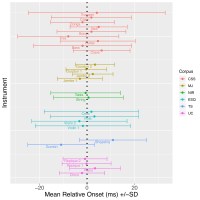

Clayton, M., Jakubowski, K., & Eerola, T. (2019). Interpersonal entrainment in Indian instrumental music performance: Synchronization and movement coordination relate to tempo, dynamics, metrical and cadential structure. Musicae Scientiae.

Summary: This paper represents the most extensive quantitative investigation of interpersonal entrainment based on natural performance data to date. We analysed note-to-note synchronisation using audio data and longer-term shared periodic movement using computer vision techniques from four North Indian classical duos playing 10 pieces in over two hours of recorded music. Synchronisation varied significantly in relation to tempo, event density, peak levels and leadership, while movement coordination was greater at metrical boundaries than elsewhere, and was most strikingly greater at cadential than at other metrical downbeats. These findings complement previous ethnographic investigations of this genre, and the methods developed here can be easily modified and extended to investigate interpersonal entrainment in other musical styles.
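As an illustration of what note-to-note synchronisation measures involve, the following is a minimal Python sketch, not the analysis code used in the paper. It assumes that onset times for the two performers have already been extracted from the audio and matched pairwise. The function name `asynchrony_stats` and the example values are hypothetical.

```python
import numpy as np


def asynchrony_stats(onsets_a, onsets_b):
    """Summarise asynchronies between two performers' matched onsets.

    Assumption: onsets_a[i] and onsets_b[i] (in seconds) refer to the same
    nominal musical event. Onset detection and matching are not shown here.
    """
    a = np.asarray(onsets_a, dtype=float)
    b = np.asarray(onsets_b, dtype=float)
    if a.shape != b.shape or a.size < 2:
        raise ValueError("need two equal-length series of at least 2 onsets")

    # Signed asynchrony in milliseconds: negative means performer A is early.
    asynchronies_ms = (a - b) * 1000.0

    return {
        "mean_signed_ms": float(np.mean(asynchronies_ms)),
        "mean_absolute_ms": float(np.mean(np.abs(asynchronies_ms))),
        "sd_ms": float(np.std(asynchronies_ms, ddof=1)),
    }


if __name__ == "__main__":
    # Made-up onset times, for illustration only.
    performer_a = [0.00, 0.50, 1.00, 1.50]
    performer_b = [0.01, 0.49, 1.02, 1.50]
    print(asynchrony_stats(performer_a, performer_b))
```

The mean signed asynchrony indicates which performer tends to lead, while the mean absolute value and the standard deviation describe how tightly the pair is synchronised.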

Eerola, T., Jakubowski, K., Moran, N., Keller, P. E., & Clayton, M. (2018). Shared periodic performer movements coordinate interactions in duo improvisations. Royal Society Open Science, 5, 171520.

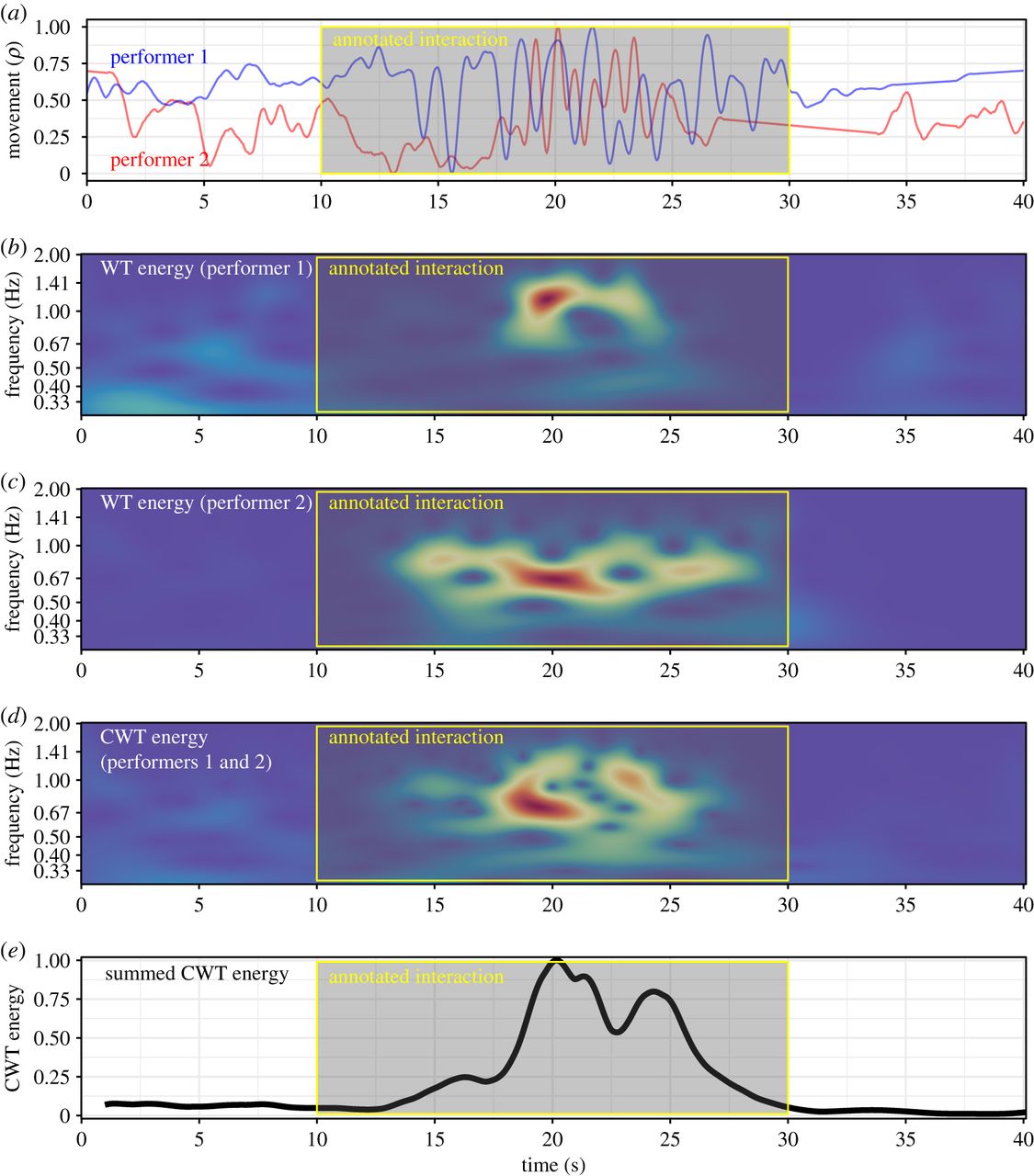

Summary: We made use of two improvising duo datasets—(i) performances of a jazz standard with a regular pulse and (ii) non-pulsed, free improvisations—to investigate whether human judgements of moments of interaction between co-performers are influenced by body movement coordination at multiple timescales. Bouts of interaction in the performances were manually annotated by experts and the performers’ movements were quantified using computer vision techniques. The annotated interaction bouts were then predicted using several quantitative movement and audio features. Over 80% of the interaction bouts were successfully predicted by a broadband measure of the energy of the cross-wavelet transform of the co-performers’ movements in non-pulsed duos. A more complex model, with multiple predictors that captured more specific, interacting features of the movements, was needed to explain a significant amount of variance in the pulsed duos. The methods developed here have key implications for future work on measuring visual coordination in musical ensemble performances, and can be easily adapted to other musical contexts, ensemble types and traditions.
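To give a rough idea of what a broadband cross-wavelet energy measure can look like, here is a minimal NumPy sketch. It is an assumption-laden illustration and not the implementation used in the paper: the Morlet wavelet, the `n_cycles` parameter, the frequency band, and the function names `morlet_cwt` and `broadband_cross_wavelet_energy` are all hypothetical choices made for this example.

```python
import numpy as np


def morlet_cwt(signal, fs, freqs, n_cycles=6.0):
    """Complex Morlet wavelet transform by direct convolution.

    Returns an array of shape (len(freqs), len(signal)).
    """
    x = np.asarray(signal, dtype=float)
    x = x - x.mean()
    out = np.empty((len(freqs), x.size), dtype=complex)
    for i, f in enumerate(freqs):
        sigma_t = n_cycles / (2.0 * np.pi * f)   # SD of the Gaussian envelope (s)
        half = int(np.ceil(4.0 * sigma_t * fs))  # wavelet half-width in samples
        t = np.arange(-half, half + 1) / fs
        wavelet = np.exp(2j * np.pi * f * t) * np.exp(-t**2 / (2.0 * sigma_t**2))
        wavelet /= np.sum(np.abs(wavelet))
        # Full convolution, then crop the centre so output aligns with input.
        full = np.convolve(x, wavelet)
        out[i] = full[half:half + x.size]
    return out


def broadband_cross_wavelet_energy(x, y, fs, freqs):
    """Cross-wavelet magnitude |Wx * conj(Wy)| summed over all frequencies.

    The result is one value per time sample: it is high when both movement
    signals carry energy at the same frequencies at the same time.
    """
    wx = morlet_cwt(x, fs, freqs)
    wy = morlet_cwt(y, fs, freqs)
    return np.abs(wx * np.conj(wy)).sum(axis=0)


if __name__ == "__main__":
    fs = 25.0                            # assumed video frame rate (Hz)
    t = np.arange(500) / fs              # 20 s of synthetic "movement"
    freqs = np.array([0.5, 1.0, 2.0])    # assumed band of interest (Hz)
    a = np.sin(2 * np.pi * 1.0 * t)
    # Performer B only moves periodically in the second half.
    b = np.where(t >= 10.0, np.sin(2 * np.pi * 1.0 * t + 0.5), 0.0)
    energy = broadband_cross_wavelet_energy(a, b, fs, freqs)
    print(energy[:250].mean(), energy[250:].mean())
```

In this synthetic example the energy is higher in the second half, where both signals move periodically at a shared frequency. A time series of this kind could then serve as a predictor of annotated interaction bouts.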

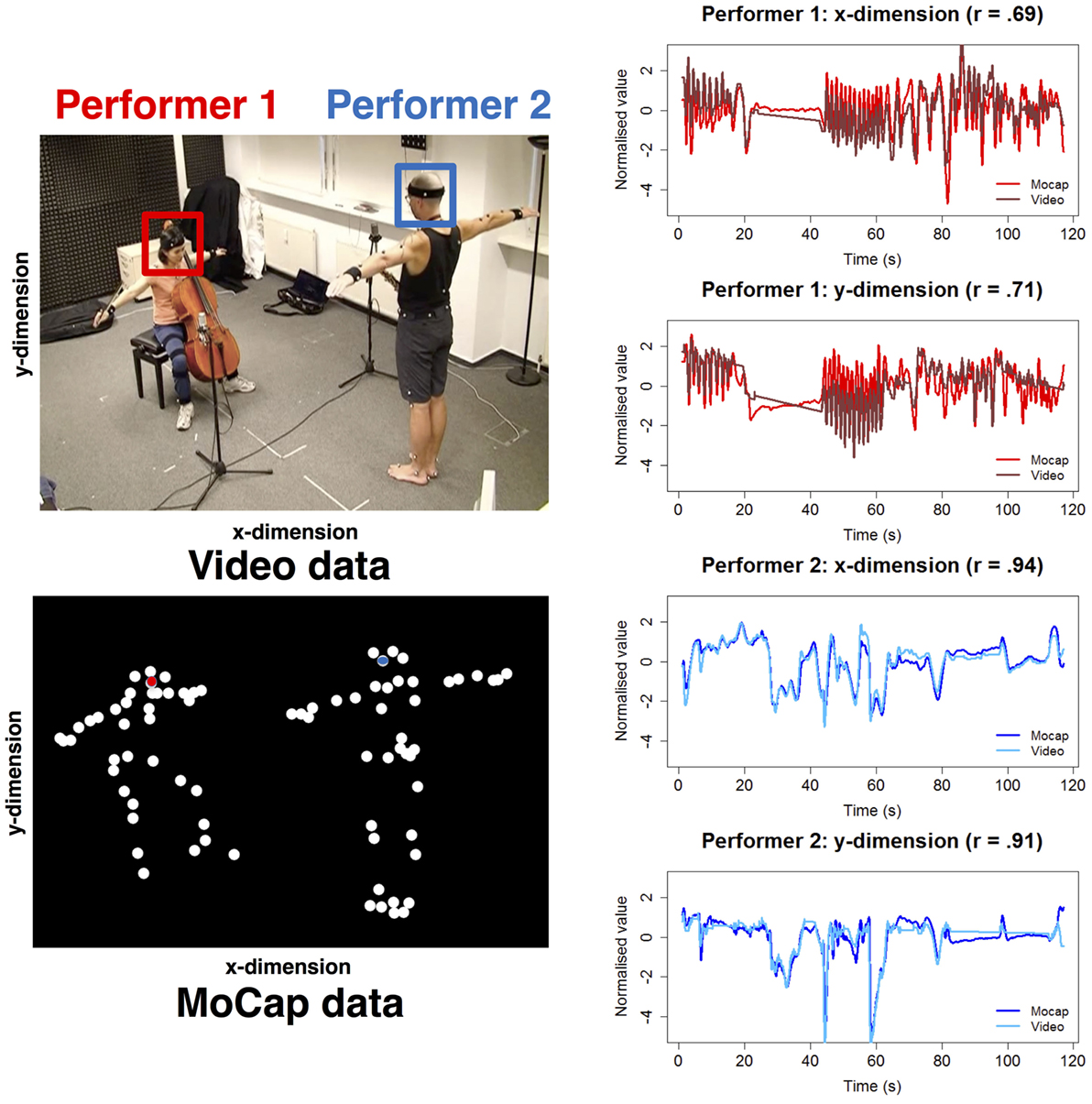

Jakubowski, K., Eerola, T., Alborno, P., Volpe, G., Camurri, A., & Clayton, M. (2017). Extracting coarse body movements from video in music performance: A comparison of automated computer vision techniques with motion capture data. Frontiers in Digital Humanities, 4, 9.

Summary: Three computer vision techniques were implemented to measure musicians’ movements from videos and validated against motion capture (MoCap) data from the same performances. Overall, all three computer vision techniques exhibited high correlations with MoCap data (median Pearson’s r values of 0.75–0.94); the best performance was achieved using two-dimensional tracking techniques and when the to-be-tracked region of interest was more narrowly defined (e.g. tracking the head as opposed to the full upper body).
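The validation step described above rests on Pearson correlations between video-derived and MoCap-derived movement series. The sketch below shows that calculation on synthetic data. It assumes both series have already been resampled to a common time base, and it does not reproduce the paper's pipeline. The function name `pearson_r` and the simulated signals are hypothetical.

```python
import numpy as np


def pearson_r(x, y):
    """Pearson correlation between two equal-length 1-D series."""
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    if x.shape != y.shape or x.ndim != 1:
        raise ValueError("x and y must be 1-D arrays of equal length")
    xc = x - x.mean()
    yc = y - y.mean()
    denom = np.sqrt(np.sum(xc**2) * np.sum(yc**2))
    if denom == 0:
        raise ValueError("correlation is undefined for a constant series")
    return float(np.sum(xc * yc) / denom)


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    t = np.linspace(0.0, 10.0, 250)
    # Simulated "ground truth" head position and a noisier video-based estimate.
    mocap_position = np.sin(2 * np.pi * 0.5 * t)
    video_position = mocap_position + rng.normal(scale=0.3, size=t.size)
    print(f"r = {pearson_r(video_position, mocap_position):.2f}")
```

Running this per performer and per tracked region, then taking the median across performances, would give summary values comparable in form to the median r values reported above.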

Research Tools

We have developed a set of user-friendly tools for quantifying and tracking movement from videos using EyesWeb (as reported in Jakubowski et al., 2017, listed above). The tools are documented and freely available to other researchers on GitHub:

https://github.com/paoloalborno/Video-Tools