As described in Part I (What is an impact of an article?), there are several ways of assessing the merits of an article. Article-level metrics avoid some of the problems associated with ranking publication forums and the costs of expert evaluation, but they introduce other dilemmas worth discussing.

An article may be cited for reasons that have little to do with whether it is novel, rigorous or significant. For instance, controversial findings will attract attention, and therefore citations, even if they are later refuted. Papers describing new methods and materials may also be cited frequently even when they are merely technical descriptions that have not delivered a major impact on the field. I suspect one of my most cited outputs, the MIDI Toolbox [210 citations], is such a technical tool; it has not really delivered many original insights by itself.

Perhaps the way to think about this is that citation-based indices can never be fully adequate measures, and they can even be harmful and misleading when used to assess individuals. Yet we do need quick heuristics to orient ourselves when navigating complex research proposals and evaluating organisations and groups. So the question is: could article-level metrics offer a sufficient compromise between efficacy and effort for capturing the quality of a group or unit?

Article-level metrics are the basic unit of analysis

I suspect that article-level metrics will eventually turn out to be the most cost-effective yardstick. Why? Well, do we even read journals anymore? They seem to have gone the same way as albums in music, and so the publication forum no longer necessarily carries the same eminence. Articles are also increasingly available in Open Access repositories, and people read them without ever seeing the full journal, let alone having access to it. One might argue that the quality of a journal comes not from its readership but from the editorial principles upheld by the editor, the action editors and ultimately the reviewers, who provide the uniform quality control and evaluation that give the articles their reputation and trustworthiness. Even if this is true, the articles themselves should be the unit of analysis rather than the forum in which they are published. Article-level metrics aggregate the data at the lowest possible level and could therefore be less susceptible to systematic attempts to bias them.

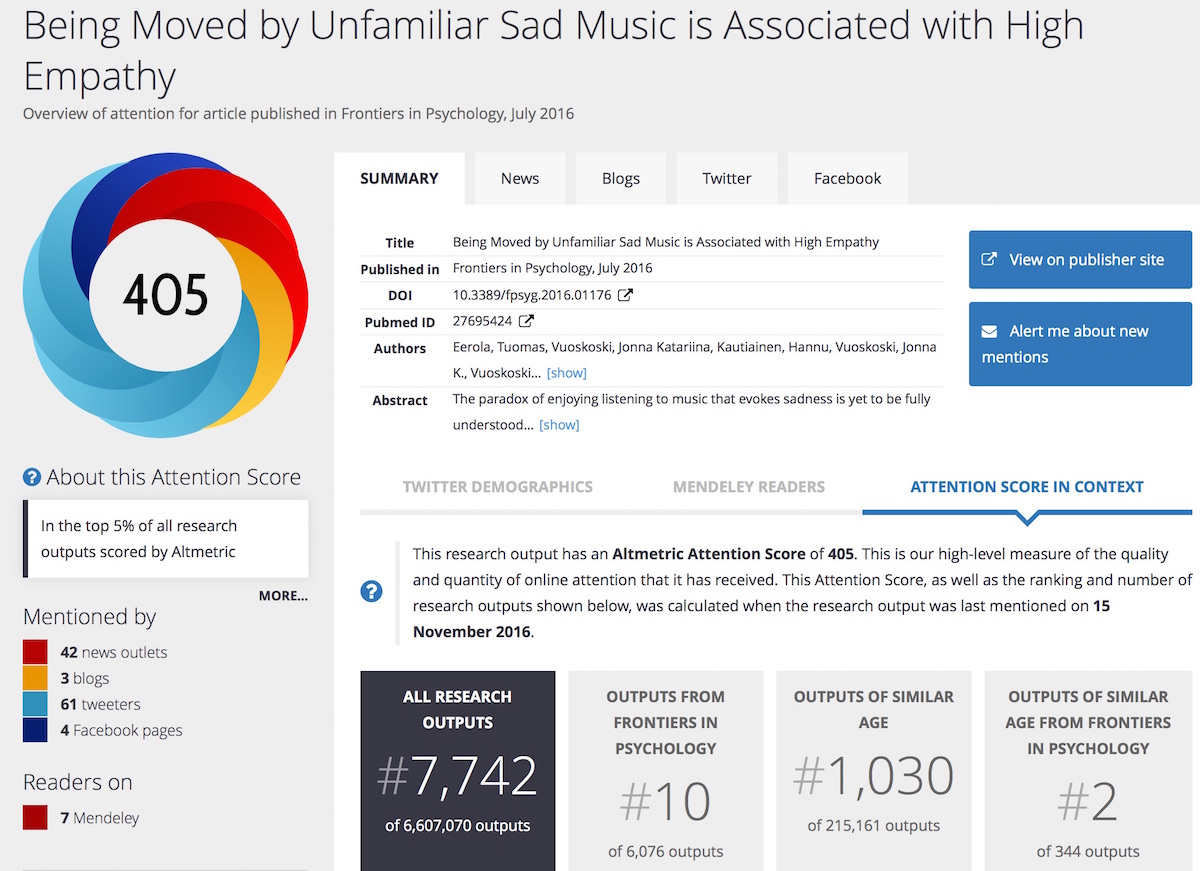

If article-level metrics do become essential elements in assessing scholarly outputs, what should be measured from the articles? The number of times an article is read (abstract views, full-text downloads, bookmarks in services such as Mendeley, CiteULike, ResearchGate or Academia)? The social media buzz around the article? What does it actually mean if an article is shared thousands of times on Twitter and Facebook? In our field, it means you have done something controversial or newsworthy, or perhaps you are just well known and any work you release gets noticed automatically (you are the Justin Bieber or Adele of music scholarship, or at least you mention one of them in the press release; see the altmetrics summary figure below for our recent music and sadness study). More broadly, these kinds of indices are known as altmetrics, after a manifesto by Jason Priem and others (2011). They cover the other attention an article receives on the internet (tweets, shares, recommendations, discussions, news stories, etc.), as tracked by Altmetric.com or other services such as Impact Story.

Altmetric scores may at least tell you what kind of interest an article attracts, but they cannot yet be considered a measure of scholarly quality, since most altmetric indices relate to media activity around the output. The tide may slowly be turning, though, as impact beyond academia starts to carry weight in assessing the merits of research outputs. For now, perhaps the safest option is to rely on the number of citations an article has received, discounting self-citations (which are another form of doping).
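To make "discounting self-citations" concrete, here is a minimal Python sketch. It assumes one common operationalisation, in which a citing paper counts as a self-citation if it shares at least one author with the cited paper; other definitions exist. The `Paper` record, the `citation_count` function and the example data are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Paper:
    """Hypothetical minimal record: a title and a set of author names."""
    title: str
    authors: frozenset[str]


def citation_count(cited: Paper, citing: list[Paper], exclude_self: bool = True) -> int:
    """Count the papers in `citing` that cite `cited`.

    Assumption: a citation is a self-citation when the citing paper
    shares at least one author with the cited paper.
    """
    if not exclude_self:
        return len(citing)
    # Keep only citing papers whose author set has no overlap with the cited paper's.
    return sum(1 for paper in citing if cited.authors.isdisjoint(paper.authors))


# Hypothetical example: one of three citing papers shares author "A".
target = Paper("A toolbox paper", frozenset({"A", "B"}))
citing = [
    Paper("Follow-up by the same group", frozenset({"A", "C"})),
    Paper("Independent study 1", frozenset({"D"})),
    Paper("Independent study 2", frozenset({"E", "F"})),
]
print(citation_count(target, citing, exclude_self=False))  # 3
print(citation_count(target, citing))                       # 2
```

Matching authors by plain name strings is a simplification; real bibliographic data would need disambiguated author identifiers such as ORCID iDs.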

Despite the cynical gaming that any mechanistic measure invites, there is reason to be positive about these metrics in our field. Music attracts wider attention because the subject is familiar and accessible, and I suspect this helps our studies get noticed across disciplines and journals. Some good research on music gets buried under the ceaseless stream of publications, but a few tips could help bring your article to readers' attention.

5 tips to improve your chances of getting noticed

- Innovate: Do research that truly breaks new ground or changes the research landscape in your area

- Aim high: Consider publishing in high-quality journals before settling for less prestigious ones

- Go for quality over quantity: Report your findings in a single impressive article rather than in several small installments

- Enrich: Provide plenty of Supporting Information (data, music examples, videos, etc.)

- Share: Make your article known to your peers via social media, the press, and good old-fashioned ‘show and tell’

No matter how we measure the impact of articles, the chart toppers in music and science would do well simply because they were original and addressed fundamental questions. While we do not have comparable measures from the past, there is little doubt that the most influential articles have been published in very good journals, cited numerous times, and are regarded as landmark studies (see the table below of the top-ranked articles, or take a look at the bibliometric analysis of articles in Music Perception by Tirovolas & Levitin, 2011). As for my 5 tips, the well-known papers and books relied mainly on tip #1. The other tips cannot alter the trajectory of mediocre ideas, but good ideas might benefit from some attention to visibility.

Highly cited papers in music psychology

| Short Title | Authors (Year) | Citations: Google Scholar (WoS) | Impact Factor | Journal |
|---|---|---|---|---|
| Intensely pleasurable responses to music … | Blood & Zatorre (2001) | 1900 (802) | 9.4 | PNAS |
| Emotional responses to music… | Juslin & Västfjäll (2008) | 954 (366) | 20.4 | Behavioral and Brain Sciences |
| Tracing the dynamic changes in perceived tonal organization in… | Krumhansl & Kessler (1982) | 846 (369) | 7.6 | Psychological Review |
| The rewards of music listening … | Menon & Levitin (2005) | 687 (299) | 5.4 | NeuroImage |
| Listening to Mozart enhances spatial-temporal reasoning… | Rauscher et al. (1995) | 530 (166) | 2.1 | Neuroscience Letters |

Citations from Google Scholar, with Web of Science counts in brackets (October 2016).
